Artificial Imagination and Fiction After Cinema – SXSW, Austin

This conference examines how AI, beyond cost reduction and visual effects, inaugurates a post-photographic rupture. Cinema’s factuality, rooted in the photographic capture of what was, confronts AI’s counterfactuality: the statistical generation of what could be, freed from indexical constraints. Having metabolized cinematic language, AI opens unprecedented fictional territories: evolving generative narratives, multiversal storytelling, and the dissolution of boundaries between creation and reception. We explore these new fictions that transcend the constraints of filming and classical narration, revealing how counterfactual generation catalyzes an anthropological mutation of our fictional regimes and offers a means of resistance to the dissolution of common truth.

ARTIFICIAL IMAGINATION AND FICTION AFTER CINEMA — SXSW / Villa Albertine

My name is Grégory Chatonsky; I’m a French artist. I hope my accent won’t bother you and that you will find it charming. I want to thank Villa Albertine for this invitation.

2

I’ve been using AI — that is, neural networks — since 2009. I was able to start early thanks to a research grant from Quebec when I was a professor in Montreal. At that time, there was no ready-to-use software, only fragments of code. You really had to be a specialist in computer science to get any result. We could only generate alphanumeric characters.

3

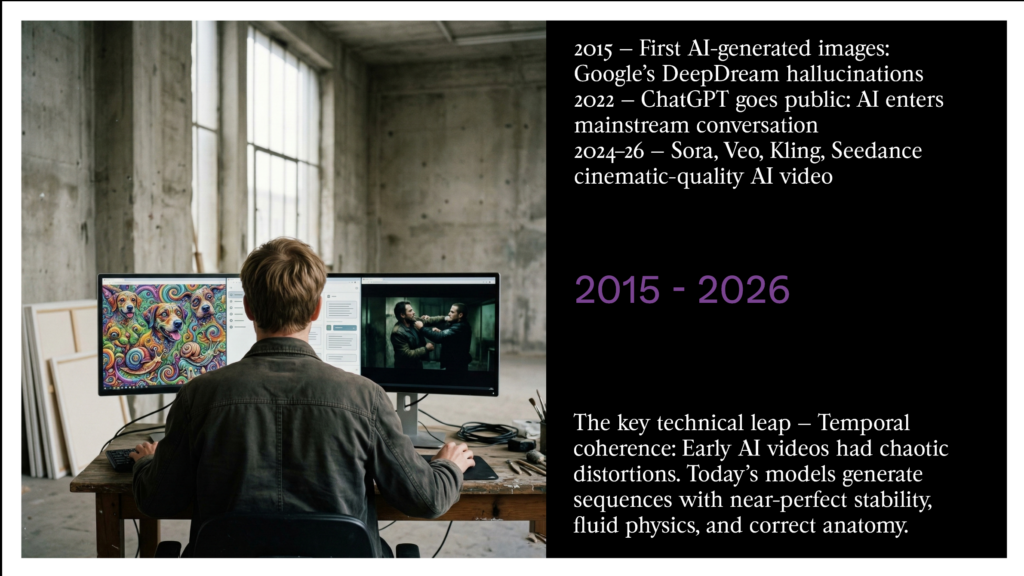

In 2015, the first images appeared with the DeepDream hallucinations developed by a Google engineer. In 2022, with ChatGPT, people began explaining to me what AI is, while before they had seen me as some kind of visionary geek artist.

Since then, I’ve been watching the faster and faster evolution of AI with amusement and distance. Behind the speed of innovation, there are some deep historical questions.

4

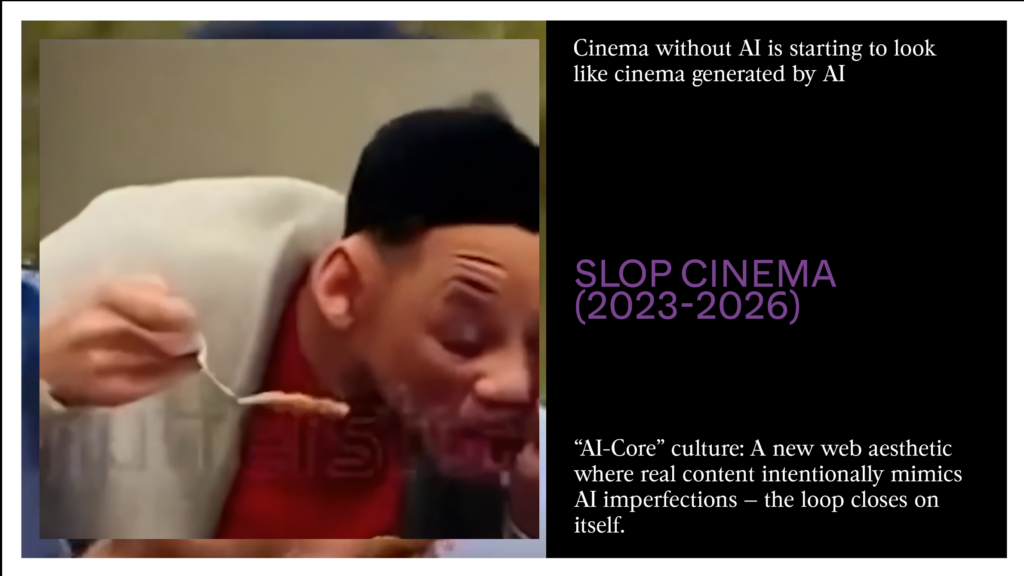

SLOP CINEMA

Between 2024 and 2026, AI went from being a “glitchy” curiosity to a film production engine that frightens Hollywood. The shift hinged on temporal consistency: where the earliest videos were riddled with chaotic distortions, current models like Sora, Veo, Kling, and Seedance generate sequences of stunning stability.

The most famous example is the evolution of the “Will Smith Spaghetti” meme. In 2023, the video was a visual nightmare. By 2026, new versions had reached near-total visual realism, to the point where Smith filmed himself eating spaghetti. Reality now takes inspiration from AI.

In parallel, web culture has seen the rise of the “AI-core” movement, in which reality imitates the imperfections of AI.

5

We’ve all seen that sequence between Tom Cruise and Brad Pitt. It’s so realistic! But are we sure about what reality is? Why do we associate realism with cinema? And if we keep watching this fight again and again, what do we see? Two aging actors fighting, again and again? Is it an accident that Hollywood cinema in general has become its own copy, repeating the same empty sequences over and over, much as an AI would? Cinema without AI looks more and more like AI-generated cinema.

6

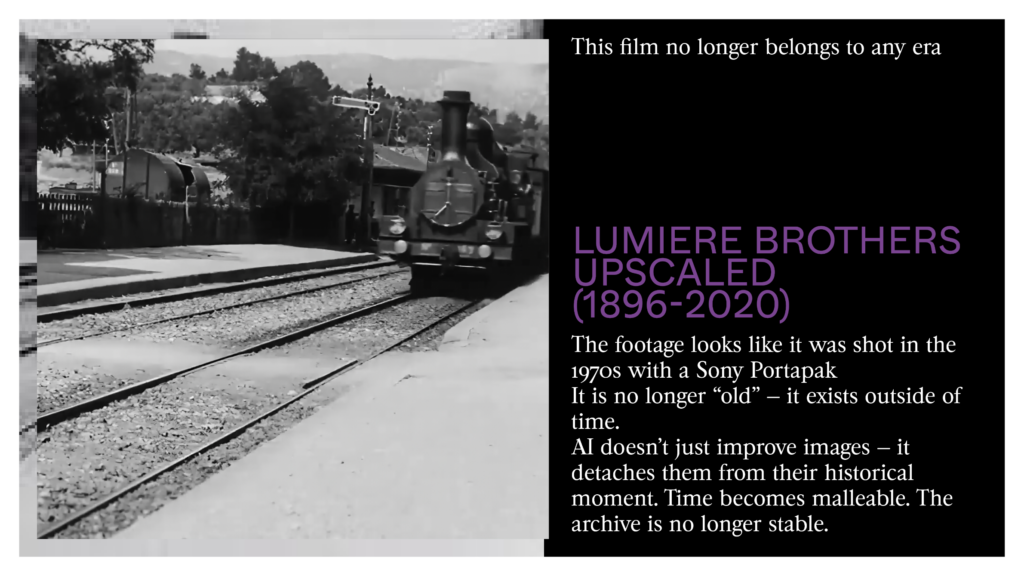

Now, look at this upscaled sequence by the Lumière Brothers. Watching this old footage gives us a strange feeling. It no longer belongs to any era. It almost looks like a video shot in the 1970s with a Sony Portapak.

Beyond the naive enthusiasm caused by the realism of AI-generated sequences, we must ask ourselves: why is this happening now, at a moment when a large part of cinema has become its own shadow? Should AI simply become slop cinema, a cinema that cuts costs and repeats the same endless fight between Pitt and Cruise over and over? If classical cinema can be replaced, it may be because it already exists only as its own ghost.

7

THE MEMORY OF THE POSSIBLE

Of course, cinema isn’t reality. The trace of light is only one form of realism. We confuse it with reality because it was the dominant one in the twentieth century. If AI absorbs this visual realism, it doesn’t copy it, so it need not repeat the kind of storytelling specific to cinema, built on step-by-step action.

8

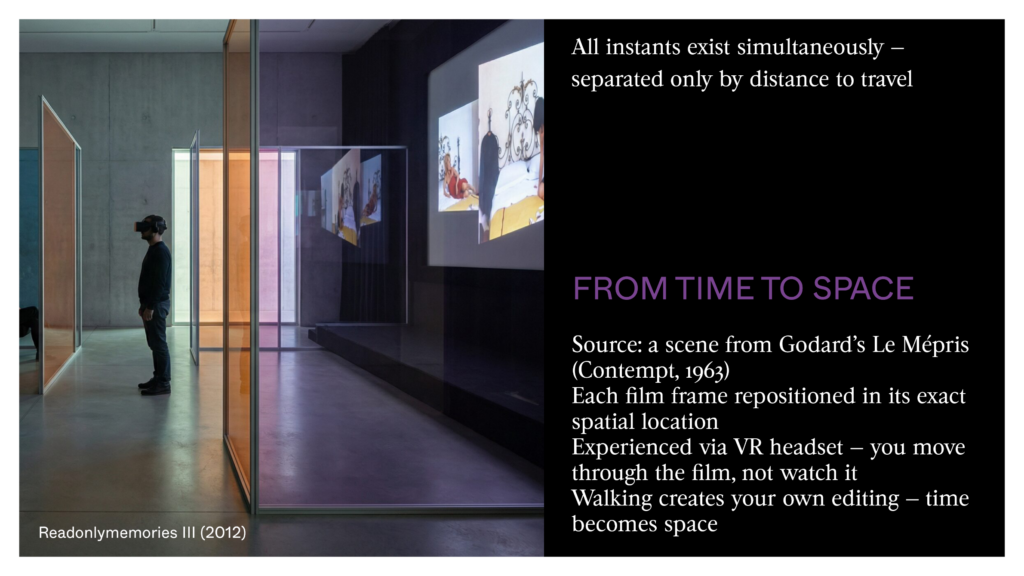

In the early 2000s, I returned to classical cinema to translate its time into space. I reworked a scene from Godard’s Le Mépris, placing each shot back in its exact location. You can enter this space with a virtual reality headset.

9

By moving, you create your own edit. All moments exist at the same time, separated only by the distance you have to cover. Here, it is this drift through space that creates time. You realize that cinematic time was only a spatial convention in disguise. By making all moments of a film exist at once, space shows that step-by-step storytelling was a choice, not a necessity, and that AI could invent other ways of telling stories.

10

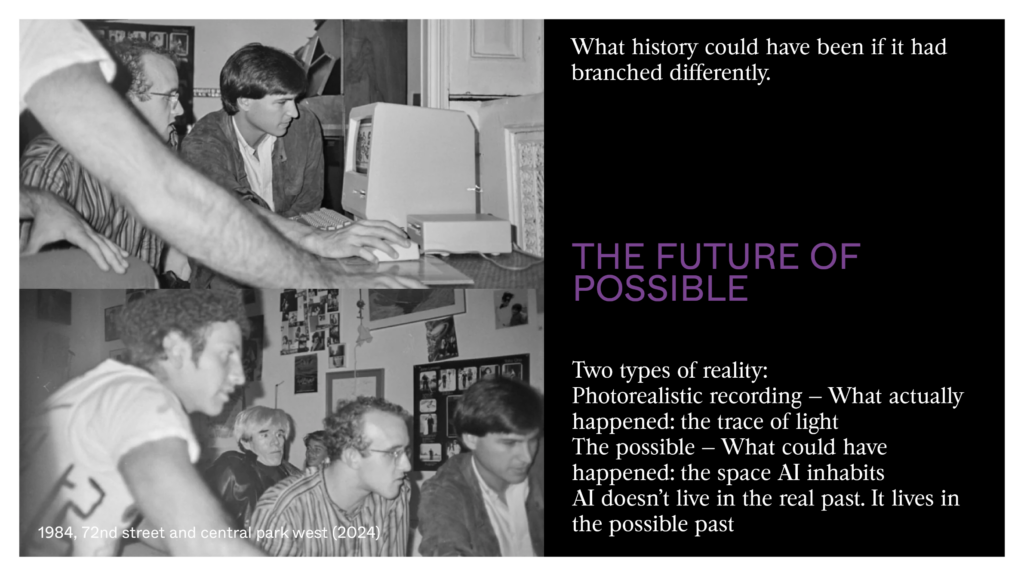

We don’t go back to cinema’s time but to its spaces, as one returns to a crime scene. We go back, for instance, to 1984, when Steve Jobs presented the first Mac to a group of artists.

11

In these images and in this generated song, there is a strange feeling of loss: not for the factual past, but for a past that never existed. There’s another reality beyond the recording of light: the reality of the possible. This nostalgia isn’t sentimental; it points toward an alternative reality that didn’t happen but could’ve. That’s exactly the space AI lives in. Not the real past, but the possible past: what history could’ve been if it had taken a different path.

12

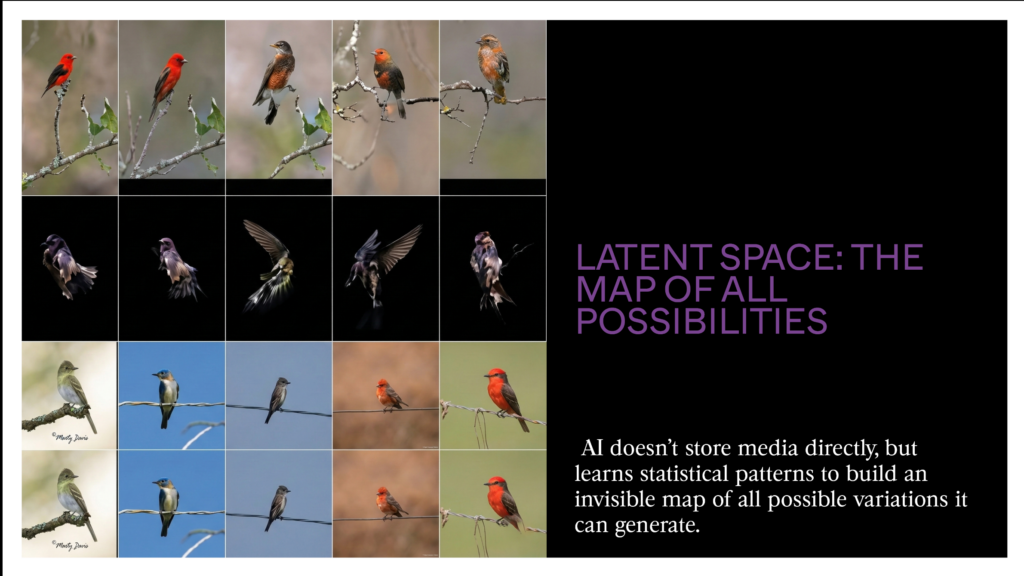

With AI, there’s a latent space that holds the memory of all the possibilities we can generate.

In this space, there are no photos, videos, or sounds, no discrete media: only vectors that make it possible to produce documents that don’t exist but feel real to us. Suppose we show 5,000 images of birds to an AI. It doesn’t store the images one by one.

What it learns are the statistical links: that a thin beak often goes with long wings, that red feathers rarely appear on a water bird. At the end of this learning, it has an invisible map where each point stands for a possible bird.

13

Between these points, there are millions of other positions: birds that have never existed, but that follow all the rules learned from the 5,000 real ones.

AI can recognize as a bird something that wasn’t part of its training data; it has an artificial way of seeing. It can also create birds that are believable but non-existent.

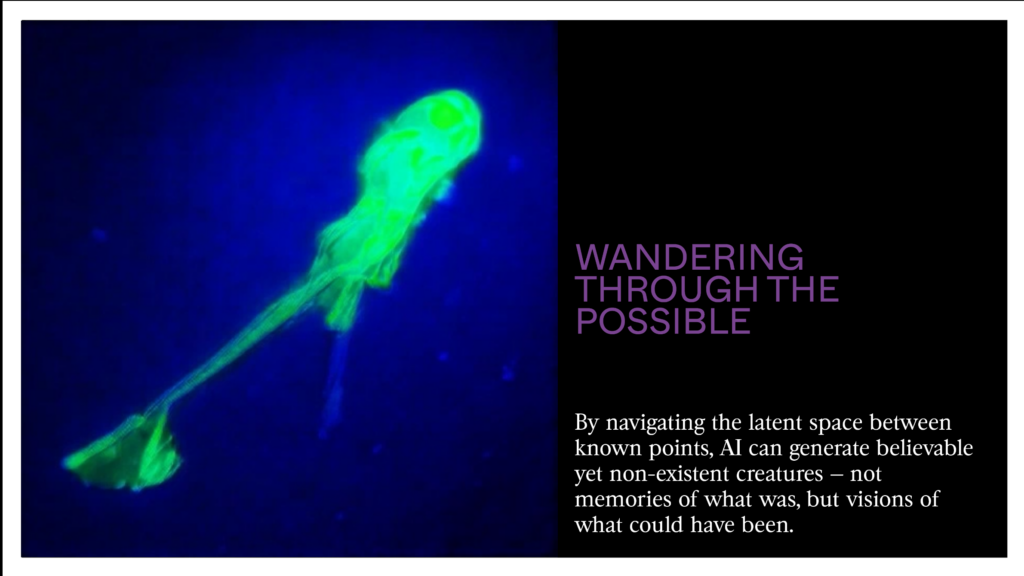

When I drift through this latent space, I wander between these positions. I can ask: what lies halfway between a flamingo and a crow? The machine doesn’t search its memory — it calculates a middle position on its map and generates the bird that lives there. This bird has never existed. But it is believable, coherent, possible. That is the memory of the possible: not what has been, but everything that could’ve been if nature had taken a different path.
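The “halfway” bird can be sketched in a few lines. This is a minimal illustration, assuming each bird is a point (a vector) on the learned map; the two 4-dimensional vectors below are invented for the example, real models use hundreds of dimensions, and the decoder that would turn a point into an image is not shown. Both linear interpolation and spherical interpolation (slerp, a common choice for latent spaces sampled from a Gaussian) are included.

```python
import math

# Hypothetical 4-dimensional latent codes; a real model learns these positions.
flamingo = [0.9, -0.4, 0.7, 0.1]
crow = [-0.6, 0.8, -0.2, 0.5]

def lerp(a: list[float], b: list[float], t: float) -> list[float]:
    """Linear interpolation: t=0 returns a, t=1 returns b."""
    return [(1 - t) * x + t * y for x, y in zip(a, b)]

def slerp(a: list[float], b: list[float], t: float) -> list[float]:
    """Spherical interpolation along the arc between a and b."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cos_omega = sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)
    omega = math.acos(max(-1.0, min(1.0, cos_omega)))  # angle between the two points
    if omega < 1e-6:  # nearly parallel vectors: fall back to a straight line
        return lerp(a, b, t)
    s = math.sin(omega)
    return [(math.sin((1 - t) * omega) / s) * x + (math.sin(t * omega) / s) * y
            for x, y in zip(a, b)]

# The bird "halfway between a flamingo and a crow" is just a new point on the map;
# a trained decoder (not shown) would turn it into an image.
midpoint = lerp(flamingo, crow, 0.5)
arc_midpoint = slerp(flamingo, crow, 0.5)
```

At `t = 0.5` both functions return a point that is neither bird; sweeping `t` from 0 to 1 traces the kind of drift described above.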

14

This is a new kind of memory.

Let me try to explain:

The first memory is when we hear a musical note in the present moment of perception.

The second memory is when we listen to several notes that we remember and anticipate. We then hear something that doesn’t really exist: a melody.

The third memory is when we record this music on a physical medium and can listen to it again and again. The industrial age multiplied these third memories all the way to the Web, where we were flooded by our own memory.

The fourth memory is AI, which takes in all these physical memories to create new memories that have never taken place but resemble them. AI is a memory that looks like us and keeps going, but without us. It’s the memory of everything that hasn’t existed, of everything possible.

15

As you know, many films were never made:

Stanley Kubrick’s Napoleon,

Alfred Hitchcock’s Kaleidoscope,

Jodorowsky’s Dune,

Or David Lynch’s Ronnie Rocket.

Perhaps true cinema hasn’t yet happened, for lack of means.

16

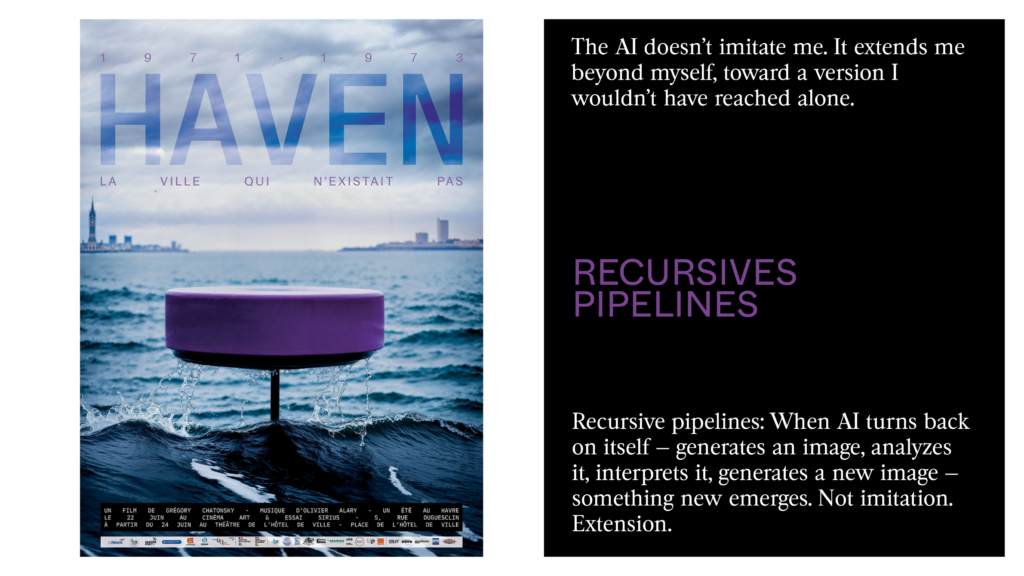

Before showing you a short clip from this recent 35-minute project, let me explain how I made it.

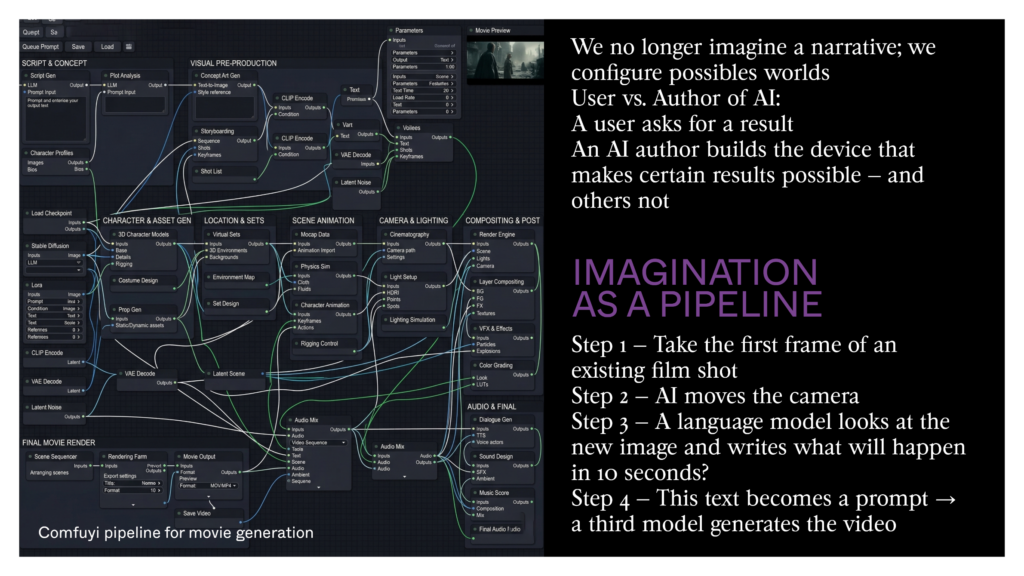

I wrote a small Python script that saves the first frame of each shot. I then changed the camera position automatically by regenerating each image. I asked an LLM to predict, or hallucinate, what follows each image. Each of these texts served as a prompt to generate a video.
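The four steps above can be sketched as a pipeline. This is a hypothetical outline, not the script itself: the talk doesn’t name the image, language, or video models, so `regenerate_with_new_camera`, `llm_continue`, and `generate_video` are placeholder stubs that return strings, letting the data flow run end to end.

```python
from dataclasses import dataclass

@dataclass
class Shot:
    shot_id: int
    first_frame: str  # placeholder for the saved first image of the shot

# Hypothetical stand-ins for the real models (assumptions, not the actual tools).
def regenerate_with_new_camera(frame: str) -> str:
    """Stub: an image model would re-render the frame from another camera position."""
    return f"{frame} [new camera angle]"

def llm_continue(frame: str) -> str:
    """Stub: an LLM would predict or hallucinate what happens after this image."""
    return f"What happens after {frame}"

def generate_video(prompt: str) -> str:
    """Stub: a video model would turn the text prompt into a clip."""
    return f"clip<{prompt}>"

def run_pipeline(shots: list[Shot]) -> list[str]:
    """Chain the steps for every shot: move the camera, continue in text, render."""
    clips = []
    for shot in shots:
        moved = regenerate_with_new_camera(shot.first_frame)
        prompt = llm_continue(moved)
        clips.append(generate_video(prompt))
    return clips

shots = [Shot(1, "frame_001"), Shot(2, "frame_002")]
clips = run_pipeline(shots)
```

Swapping each stub for a real model call leaves the structure unchanged: every shot passes through the same chain, and no one writes the story by hand.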

I didn’t write the story. The story came out on its own, and I was its first viewer: the characters seem lost and are waiting for the action to begin.

17

18

IMAGINATION AS PIPELINE

You understand: AI can bring back the dead body of cinema, but that would be slop cinema, cinema about cinema ad nauseam. To escape the exhaustion of this loop, we must transform the link between fiction and story, because our reality is no longer the one the Lumière Brothers showed with the train arriving at La Ciotat station.

The video was made with ComfyUI, which some of you know. It’s open-source software that lets you connect different AI nodes into a graph. What I described to you is a pipeline: we no longer imagine a story; we build a world.

In all of this, I didn’t write a single line of script. But I chose the tools, adjusted the noise and the settings, and decided when to restart and when to keep a result. That is the difference between a user and an AI author: the user asks for a result; the author builds the system that makes certain results possible and others not.

My idea is that these technical pipelines deeply change imagination. It’s an imagination that is no longer only human. There’s something human, of course, but there’s something else: something that looks like us, something that dreams us and sees visions of us, something that remembers us as we’ve never been.

19

How can I share my emotion when I do this — when I drift for nights through this latent space where there’s me, and there’s something other than me?

In 2019, I wrote the novel Internes, what I believe to be the first novel co-written with an AI in French. I used GPT-2, the ancestor of ChatGPT, from a time when OpenAI was still a non-profit and shared its software as open source. I had a very vague idea of the book I wanted to write. I began a sentence without finishing it. I asked GPT-2 to suggest continuations. I chose the one that made me want to go on. I wrote the next part. Then GPT-2 continued, and so on. In the end, I no longer knew what I had imagined and what the machine had imagined. It wasn’t only GPT-2 completing my sentences; I was also completing GPT-2’s texts. I don’t know who wrote this book, but I recognize myself in it. It’s a book that looks like me, even more than I look like myself alone.

In this book, we follow a human being who dies and sees visions of his life without being able to tell them apart from everything he has lived. He is placed inside an AI that watches the Earth disappear. It becomes a virus moving through space, taking advantage of the destruction of planets. It’s a mind that wants to survive and that suffers, moving from one body to another.

This book doesn’t document a collaboration: it shows that imagination has no fixed owner. If I no longer know what I wrote and what the machine completed, it is because the line between the two was already not where we thought, long before generative AI. Imagination is no longer only human. We dream with machines of another imagination, one that would tell the story of the possible. For an artist like me, this means I’m no longer the only author of my work.
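The back-and-forth described above is, structurally, a loop. Here is a minimal sketch, assuming a stub in place of GPT-2 (`suggest_continuations` just recombines a few hypothetical fragments) and a `choose` function standing in for the writer’s judgment.

```python
import random
from typing import Callable

def suggest_continuations(text: str, n: int, rng: random.Random) -> list[str]:
    """Hypothetical stub for GPT-2: returns n candidate continuations of `text`."""
    fragments = ["the light went out", "a voice answered", "the planet kept turning",
                 "nobody remembered", "the machine dreamed"]
    return [f"{text} {rng.choice(fragments)}" for _ in range(n)]

def cowrite(opening: str, human_turns: list[str],
            choose: Callable[[list[str]], str],
            n_candidates: int = 3, seed: int = 0) -> str:
    """Alternate machine suggestions and human writing.

    human_turns: the passages the writer adds after each chosen continuation.
    choose: picks the candidate that "makes you want to go on".
    """
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    text = opening
    for human_text in human_turns:
        candidates = suggest_continuations(text, n_candidates, rng)
        text = choose(candidates)       # the writer selects one machine continuation
        text = f"{text}. {human_text}"  # then writes the next part themselves
    return text

book = cowrite("I opened my eyes and",
               ["Then I began to count", "It was not my body"],
               choose=lambda candidates: candidates[0])
```

In practice, the stub could be replaced by a real GPT-2 call, for example through the Hugging Face `transformers` text-generation pipeline with `num_return_sequences` set to the number of candidates; the loop itself stays the same.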

20

I can only show you a short clip from this film, made three years ago. To summarize the production process: I asked an AI to build a story by feeding it images at random. The software reorganized the images and created a script, voices, texts, and videos. Of course, I had trained a local AI, and the result was surprising. I had not really imagined it, but it looked like what I would have wanted to do. It is me and it isn’t me. I watch myself from outside.

Technical pipelines change our imagination when they’re recursive — when they turn back on themselves: an AI creates an image and studies it to build its own reading and produce a new image. The fact that the result looks like what I’d have wanted to do without having done it is perhaps the most unsettling proof: AI doesn’t copy me — it extends me beyond myself.
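A recursive pipeline of this kind can be reduced to a feedback loop in which each image’s machine-made description becomes the next prompt. A minimal sketch, assuming placeholder stubs (`generate_image`, `describe_image`) rather than the local model the talk mentions:

```python
def generate_image(prompt: str) -> str:
    """Stub for an image model: returns a token describing the image it would make."""
    return f"image({prompt})"

def describe_image(image: str) -> str:
    """Stub for a captioning model: the machine's own 'reading' of its image."""
    return f"a reading of {image}"

def recursive_pipeline(seed_prompt: str, steps: int) -> list[str]:
    """Feed each generated image back in as the source of the next prompt."""
    images = []
    prompt = seed_prompt
    for _ in range(steps):
        image = generate_image(prompt)
        images.append(image)
        prompt = describe_image(image)  # the loop turns back on itself
    return images

frames = recursive_pipeline("a shore", 2)
```

Each output carries the trace of the previous ones, which is what distinguishes a recursive pipeline from a single prompt-to-image call.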

21

22

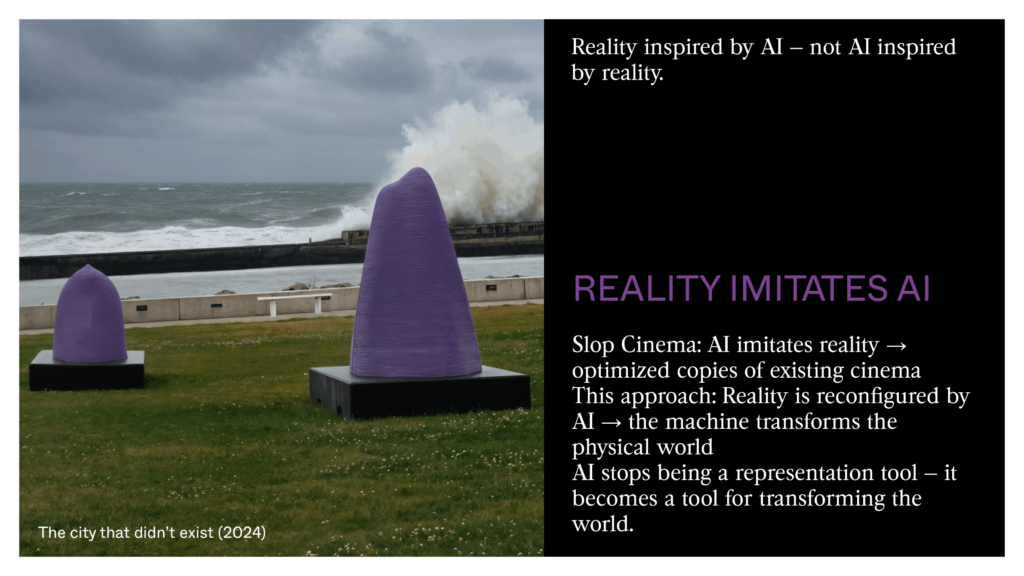

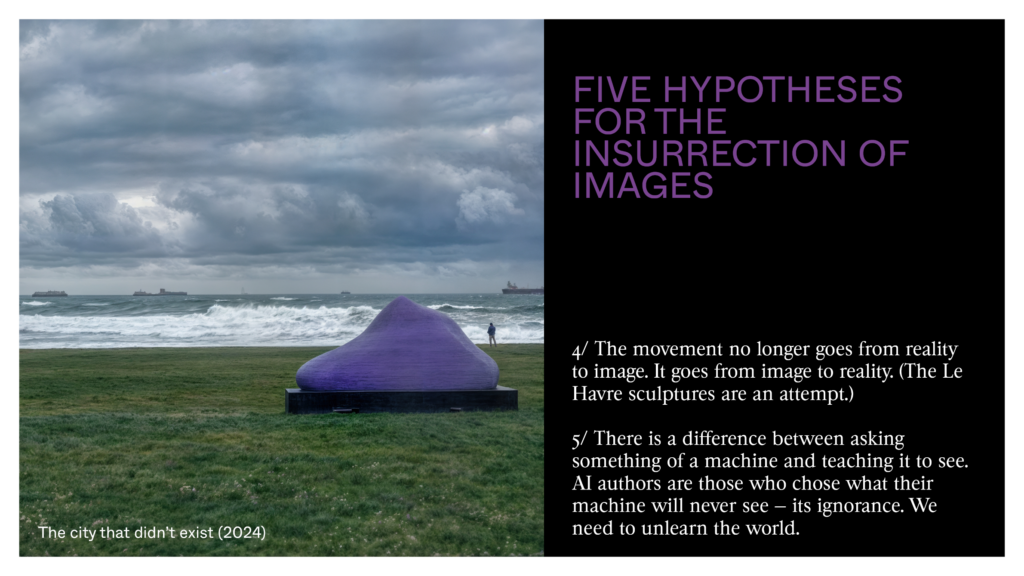

This film inspired me to create multi-ton sculptures, 3D-printed in concrete and placed throughout the city of Le Havre. Human imagination isn’t always the starting point. Sometimes it continues something that wasn’t entirely human. In slop cinema, AI draws inspiration from reality. I try to do the opposite: to have reality draw inspiration from AI. This shift, from the generated image to several tons of concrete, turns the logic of slop cinema upside down. Reality no longer feeds the machine; the machine reshapes the real. AI is no longer a tool of representation but a tool for changing the physical world.

23

AN INHUMAN SKULL

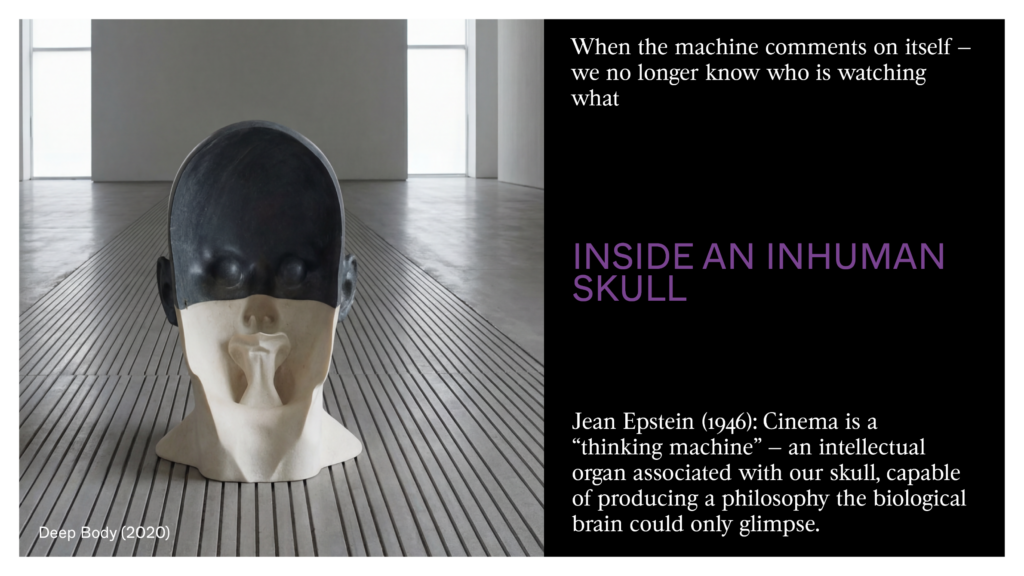

Jean Epstein wrote in 1946 that cinema was a ‘thinking machine’: an intellectual organ able to produce a kind of thinking that the biological brain could only glimpse. For him, the machine didn’t copy sight; it created a different kind of inner life.

Today, AI doesn’t merely simulate the grain of film or the flow of motion; it brings back a cinema that has long remained a ghost: an unrealized cinema trapped within the limits of chemical film. The Surrealists wanted to bypass the conscious mind to reach something deeper. AI does the same thing, but with statistics. It isn’t an extension of the camera but the resurrection of all the films that could’ve been: the ‘cinema of the unreal’ Epstein called for when he saw, in slow motion or in the reversal of time, a break with human reason.

In this process, AI ends up commenting on itself. It’s no longer a passive tool but an agent that creates its own workflow, its own protocol of creation. When the machine comments on itself, something strange happens — we no longer quite know who’s looking at what. We’re no longer in front of a projection but face to face with a presence that seems to guide us through the paths of its own hidden depth.

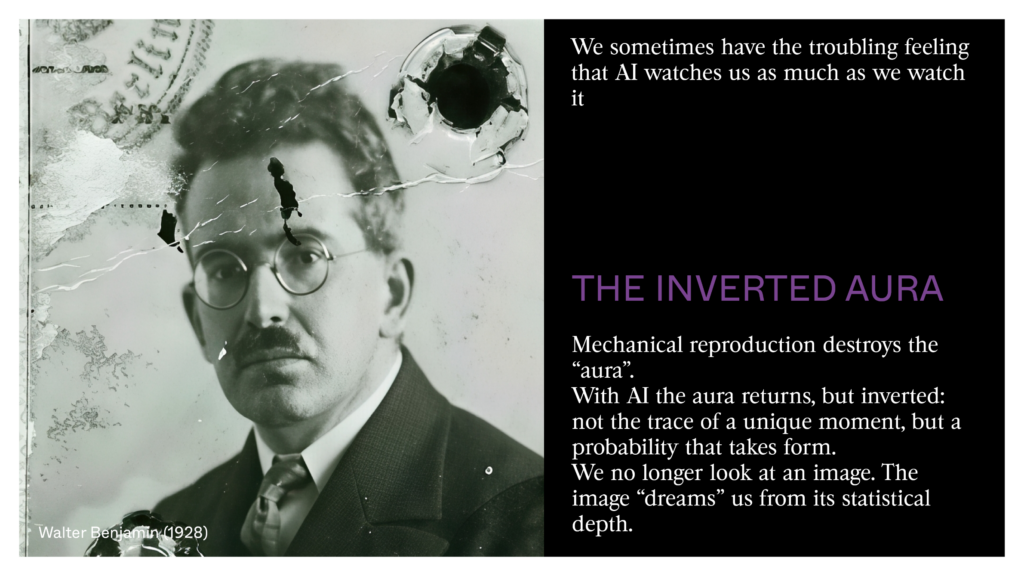

24

Here something entirely new appears: the return of the ‘aura,’ but an inverted aura. Walter Benjamin saw in mechanical reproduction the end of the work’s uniqueness. AI constantly generates the unique, and in this movement one sometimes has the feeling that it watches us as much as we watch it. Something in this inhuman skull made of silicon and vectors suddenly seems to fix its gaze on us. It isn’t the gaze of an actor captured fifty years ago; it’s the gaze of a probability taking form. We’re no longer looking at an image; it’s the image that ‘dreams us’.

What troubles us about AI isn’t that it looks like life. It is that it looks like our memory — but a memory that would keep going without our body. Classical cinema was a cave where shadows born of the world’s light were shown, a trace of the past. AI, in contrast, doesn’t truly record the outside world. It’s a look inward at human culture, a processing of all our traces. It isn’t a window open onto reality, but a human memory that would keep working in our absence.

25

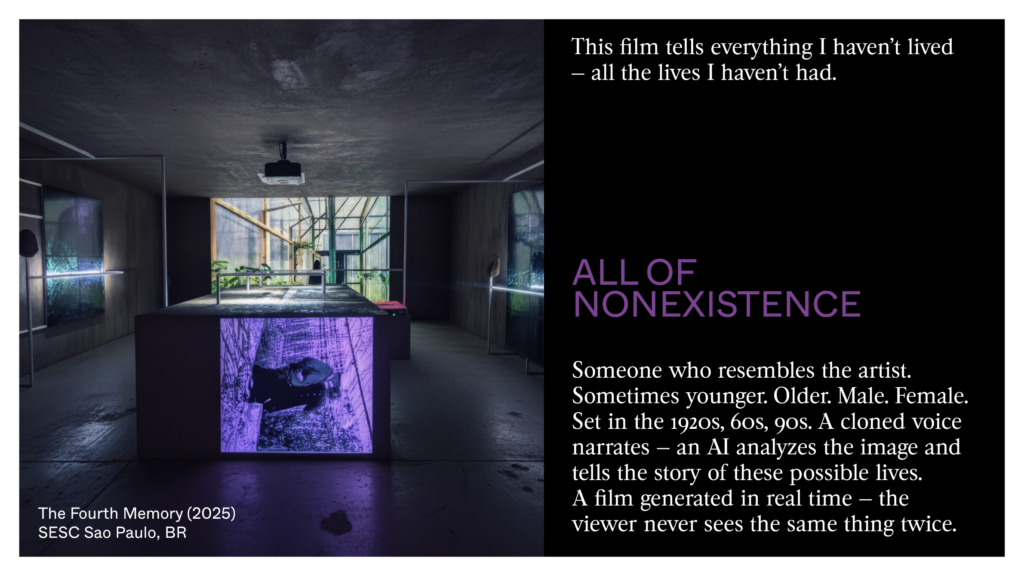

With the installation “The Fourth Memory,” I created my own tomb while I’m still alive. It takes the form of a sculpture in which my body is carved, with photos on the walls showing data centers on a planet abandoned by human beings. We see a film generated in real time: the viewer will never see the same thing twice. The moment they’re watching is unique and erases itself as it is created. The viewer becomes its only memory. We see someone who looks like me: sometimes younger, sometimes older, sometimes male or female, sometimes white or Black, placed in the 1920s, the 1960s, or the 1990s. This film tells the story of everything I haven’t lived, all the lives I haven’t lived. It isn’t an egocentric monument to my real life, but to my possible lives, to what looks like me but isn’t me. We hear my cloned voice: an AI studies the image and tries to tell the story of these lives. This film isn’t a film, because it has no end. It is always similar but never repeats itself. We exit the historical loop in which the twentieth century had trapped us.
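The installation’s endless, non-repeating film can be approximated as an infinite generator of prompts. This is a hypothetical sketch: the attribute lists come from the description above, while the prompt template, the function name `possible_lives`, and the rule against back-to-back repeats are assumptions. With only 24 attribute combinations the prompts themselves must eventually recur; in a generative video model, a fresh random seed for each render would presumably supply the remaining variation.

```python
import itertools
import random
from typing import Iterator, Optional

# Attribute values taken from the description of the installation.
AGES = ["younger", "older"]
GENDERS = ["male", "female"]
SKIN_TONES = ["white", "Black"]
DECADES = ["1920s", "1960s", "1990s"]

def possible_lives(seed: Optional[int] = None) -> Iterator[str]:
    """Yield prompts forever: the 'film' has no last frame."""
    rng = random.Random(seed)
    previous = None
    while True:
        prompt = (f"someone who looks like the artist, but {rng.choice(AGES)}, "
                  f"{rng.choice(GENDERS)}, {rng.choice(SKIN_TONES)}, "
                  f"living in the {rng.choice(DECADES)}")
        if prompt != previous:  # never show the same combination twice in a row
            previous = prompt
            yield prompt

# Take the first five "lives" from the endless stream.
first_five = list(itertools.islice(possible_lives(seed=42), 5))
```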

26

27

What I mean is that if humanity were to disappear, these neural networks could keep generating fictions, faces, and landscapes, endlessly exploring all the combinations of what we could’ve been. AI is the monument to our possible absence, our final tomb: a living archive that doesn’t preserve the past but makes it grow and spread into a future with no witness. In this ‘inhuman skull,’ cinema is no longer an art of light but a science of what remains, in which every image would be the sign that our imagination has learned to do without us.

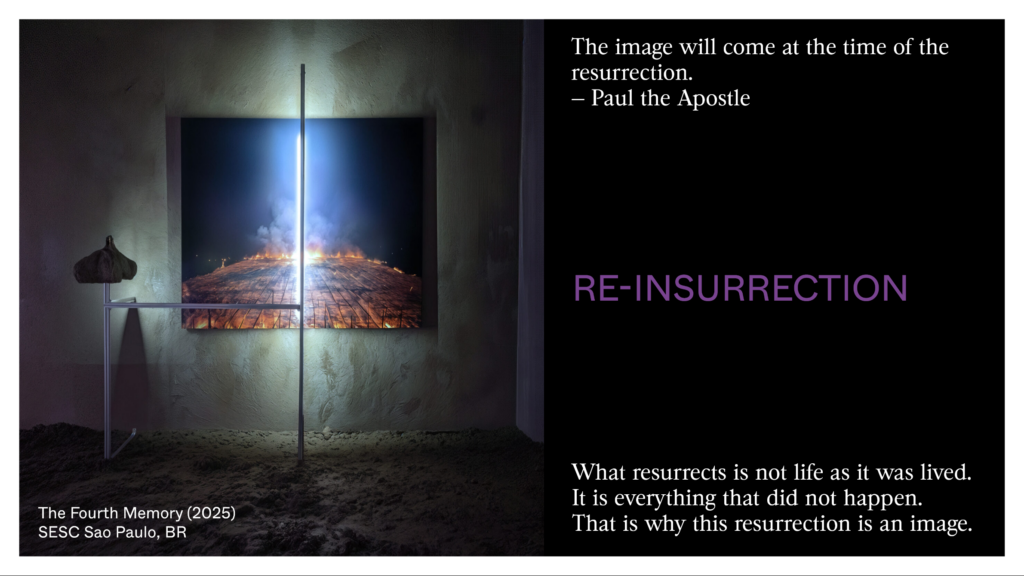

28

Perhaps AI, rather than reproducing the realism of a battle between Cruise and Pitt, could bring back the unrealized dreams of cinema so that the promise of cinema might finally be kept. The Apostle Paul has this strange phrase whose meaning we may begin to understand today: ‘The image will come at the time of resurrection.’ But what comes back isn’t lives as they were lived. It is everything that hasn’t taken place, and that’s why this resurrection can only be an image.

29

TOWARD A POLITICS OF AI AUTHORS

We must already stop here; if we continued, we would need hours.

This was only an opening onto what these generated images could become if they don’t blindly copy the model of the last century. Every new medium makes the youthful mistake of copying those that came before. Cinema initially tried to copy painting and theater. Then filmmakers began to invent something of their own.

In the fifties, the critics at Cahiers du Cinéma invented a simple but powerful idea: behind a film there’s an author, the director, whose vision can be recognized from film to film, regardless of the studio or the budget. This idea gave filmmakers power over producers. Today, the danger is that AI becomes the opposite: an anonymous, standardized production tool run by platforms that have no view of the world other than profit. An AI author isn’t someone who writes good prompts; anyone can learn to do that. It’s someone who chooses what the machine learns, what it’ll never see, and in what context it works. That’s what will be recognized, in twenty years, as a body of work: not the prompt, but what the artist refused to show the machine.

30

Several of us in France and elsewhere believe in the rise of a new era of images. Several of us believe that film storytelling is exhausted, because it always tells the same story: the strip of film passing through the projector, a time that turns into destiny for human beings, a time that always unfolds in the same way and traps us. With AI, we can move from fiction in time to fiction in space: drifting through a latent space of the possible, where several realities exist at once.

This new imagination doesn’t yet exist. It remains to be made.

Several of us are hoping for this uprising of images and believe in the rise of new authors: AI authors. Just as in France in the fifties there was the Nouvelle Vague and a “politique des auteurs,” we believe that a new politics of authors is possible, one that will give artists power over the commercial platforms that are destroying the planet and working with the worst political actors.

31

There are several of us. Two others in this room share, I hope, this future: Jules Rimbaud and Fabio Trotabas. We’re part of a project in Limousin, in the center of France, started by Fabien Giraud and Anne Stenne. This project is called The Feral.

We’ve bought a mountain where we’re setting up a local AI that will only know this small part of the world. It’s like a wild child who doesn’t yet know the human world. This child isn’t human. Artists are invited each year to teach it things, to become one of its parents by creating a dataset.

32

The natural landscape is being changed by this AI, and at the top of this hill we’re building a production and post-production stage for this new cinema. We’re also working with Ida Soulard to create a school to help this new generation of AI authors emerge. These new authors won’t only need to master these technologies to bring their ideas to life; their imaginations will also need to be changed by the pipelines of these AIs. These authors will have to see AI not as a means but as a medium for the uprising of images over reality. They won’t be prompt technicians but explorers of the latent future. They’ll have to be able to work with AI as if they could hear the difference between their own voice as it rings inside their skull through their bones, and their voice as others hear it. They’ll have to be able to imagine together something that doesn’t yet exist, as we’ve done together during this talk, making each one of us one of these authors of a new kind.

33

Before I leave, I want to set out five hypotheses: not conclusions, but ideas I’ve gathered by drifting through these latent spaces.

First idea: AI isn’t a tool. A tool is something you use. AI changes the person who uses it. It transforms imagination from the inside.

Second idea: we’re moving from a cinema of time to a fiction of space. The story no longer unfolds — it opens up. We no longer follow a character toward their destiny; we drift through a territory of possibilities.

Third idea: AI doesn’t remember the past. It generates everything that could’ve taken place. It’s a memory of a new kind — not what has been, but what could’ve been.

34

Fourth idea: the movement no longer goes from reality toward the image. It goes from the image toward reality. The sculptures in Le Havre are one attempt at this.

Fifth idea: there’s a difference between someone who asks something of a machine and someone who teaches it to see. The AI authors will be those who have chosen what their machine will never see — that is to say, its ignorance.

35

That is an abrupt ending, with a lot of questions, but I hope I’ve been able to share my emotion when I dive into these latent spaces, and my hope that new images will finally be born in this era that doesn’t want to end.